This AI-Based Anti-Loitering System For Storefronts Doesn't Seem Like Big Brother At All

A company called Interface Security Systems has announced via press release that it has developed an AI-based anti-loitering system designed to "prevent intrusions before they happen." This system uses artificial intelligence to detect people or vehicles that are hanging around the storefront too long, then triggers "pre-recorded, scenario-specific voice recordings to deter loitering." This sounds like it'll go over well.

More from the press release, shared by QSR Magazine:

"This system addresses the unique challenges of deterring individuals from loitering on company property after hours," says Tom Hesterman, SVP of Products and Solutions. "Our anti-loitering system also discourages people from dumping items or 'casing' the property, leaving no doubt that the security system is not only functional but very responsive and sophisticated. Simple motion control is prone to false triggers when used outside. By utilizing an intelligent IP camera that can detect people or vehicles, we eliminate false alarms and greatly enhance the effectiveness of the system."

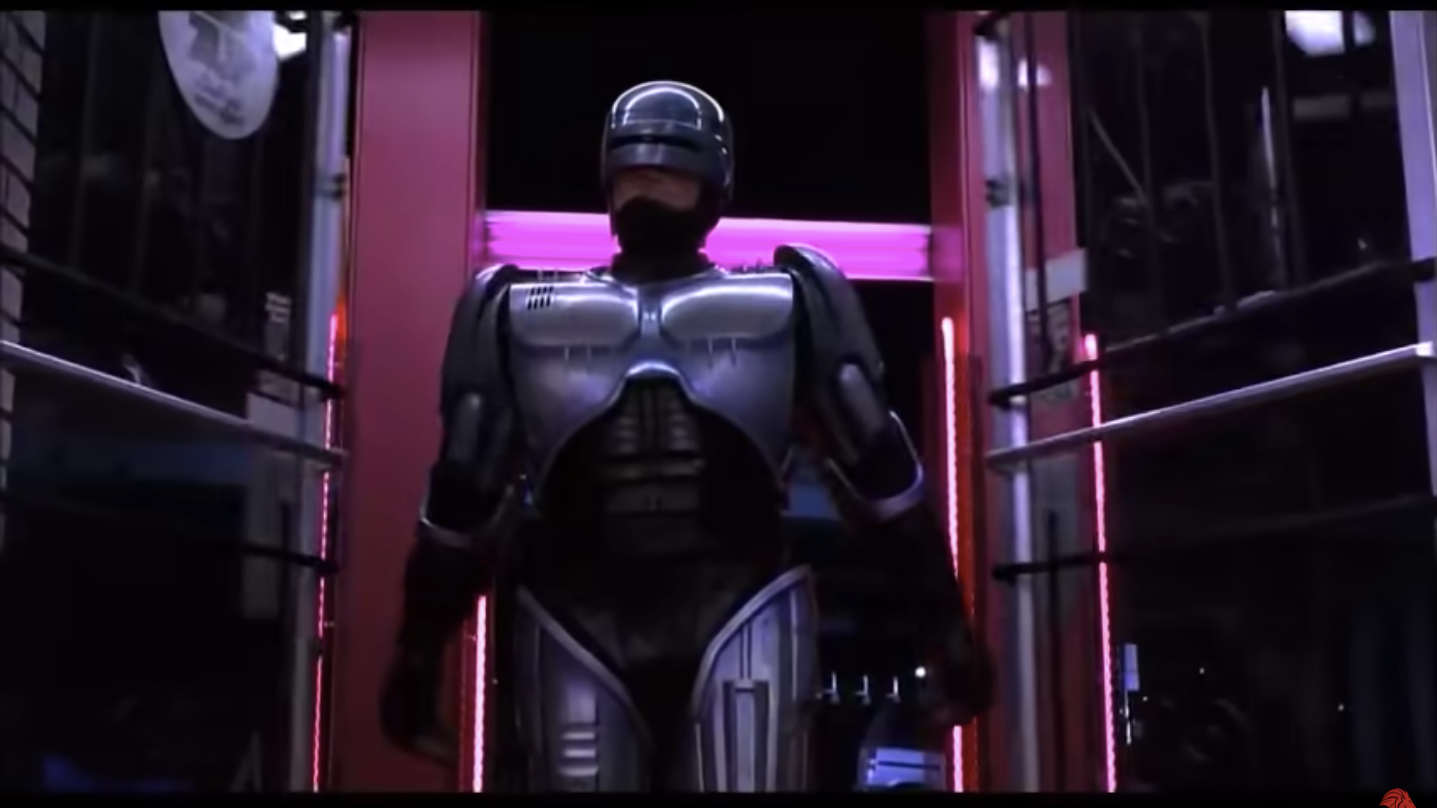

Not only does this system sound like a baby version of Minority Report, it also sounds like a slightly upgraded (yet still shitty) version of that Viper car alarm that shouts at you if you get too close to the car.

Designed to tackle after-hours perimeter control challenges such as vagrancy, intrusion, and vandalism, the Anti-Loitering System can be customized by loss prevention teams to play different warning and notification messages depending on location and dwell time. The messages played can be calibrated to first inform or educate the person before issuing a warning.

So basically, it sounds like you might be able to blast "Stay away from my shop, you lousy kids!" or "Hey you, you've been rummaging in our dumpster for two months straight now!" Instead of just yelling these things, you can pay to have an expensive machine do it for you.
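
For what it's worth, the logic being described is not exactly rocket science. Here's a rough Python sketch of how picking a message by location and dwell time might work. To be clear, everything in it is made up for illustration: the zone names, the thresholds, the message wording, and the `message_for` helper are my assumptions, not anything Interface has published.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Stage:
    min_dwell_s: float  # seconds in view before this stage applies
    message: str        # recording to play at this stage


# Hypothetical schedule: zones, thresholds, and wording are invented here.
# Each zone starts by informing and only later escalates to a warning.
SCHEDULE = {
    "front_door": [
        Stage(60, "This store is closed. We open at 9 a.m."),
        Stage(180, "You are on private property. Please move along."),
    ],
    "dumpster": [
        Stage(30, "No dumping is allowed here."),
        Stage(120, "This area is monitored. Please leave now."),
    ],
}


def message_for(zone: str, dwell_s: float) -> Optional[str]:
    """Return the message for the highest stage reached, or None if no stage applies."""
    chosen = None
    for stage in sorted(SCHEDULE.get(zone, []), key=lambda s: s.min_dwell_s):
        if dwell_s >= stage.min_dwell_s:
            chosen = stage.message
    return chosen
```

With this made-up schedule, someone who has been at the front door for 90 seconds gets the polite "we're closed" message, and the sterner one only kicks in after three minutes. Unknown zones and short visits get no message at all.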

The Interface Security Systems website also states, "The system can be integrated with lighting, alarm systems or can be configured to alert our remote security professionals if the subject being monitored refuses to comply with audio instructions." Hopefully they send the world's greatest security officer, Paul Blart.

Personally, this seems needlessly intrusive, and I get the impression that this system is susceptible to all the same human biases and creeps that it's trying to out-maneuver, since there's still the "remote operator" aspect of it all. Plus, you know what? Artificial intelligence is still prone to making mistakes, and lots of them. The fact that this is also targeting vagrancy (their words, not mine) reeks of blatant discrimination. What do you think? Is there any reason to believe that an AI-based system built to monitor whether you're outside a store for longer than 10 minutes is a good idea?